Else at SXSW

Intro: 8 Themes from SXSW 2026

After a couple of weeks marinading, here are some considered themes from SXSW 2026

By Warren Hutchinson, Founder & Chief Experience Officer

This was my fifteenth SXSW, so I’ve watched enough hype cycles come through Austin to know the difference between noise and signal.

I was there for the blockchains, NFTs, the metaverse. And I’ve seen plenty of ideas arrive with fanfare, only to peter out with a ‘meh’.

But AI feels different.

And, for me, SXSW ’26 made that clearer than ever.

AI is happening to every organisation.

The question is whether you’re shaping it, or being shaped by it.

This is an intro article to the eight themes I’ve synthesised from attending 30+ talks at SXSW. It tees up some background that’s relevant to where we are, and will be followed by a series of articles that go into the themes in more detail. I’ll be publishing these over the coming weeks.

Why has it taken so long to pull this together, seeing as SXSW was three weeks ago?

Well, firstly there is a lot to get your head around and this wasn’t a time for a ‘hot take’.

These things needed a little marinading, as I want to unearth the topics that feel overarching and key in principle, rather than ‘in the moment’ specifics.

This area is moving so fast that no one can keep up, so I’m looking for the macro themes, watch-outs and considerations.

It took me a little time. The dust needed to settle. The fervour needed to calm down.

Four Years, Four Characters

- 2023: The emotional reaction – ChatGPT had launched a few months earlier and SXSW lost its mind. Every session was about prompts and productivity. Raw excitement, raw anxiety. Nobody had a framework for what was happening.

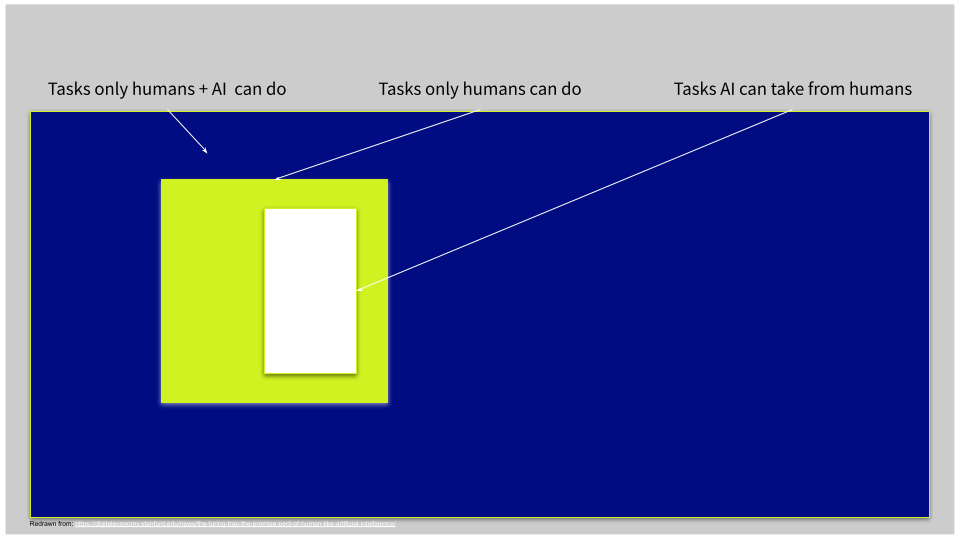

- 2024: Framing – The mood shifted from ‘wow’ to ‘okay, what do we do with this?’ An MIT/Stanford article showed a diagram I keep coming back to (see below). The vast blue space is where human and AI capability combine to create entirely new value. The small white rectangle is where most organisations are operating: existing capability, just done faster. Organisations started investing, restructuring, hiring AI leads.

- 2025: Infrastructure – My three takeouts: AI is no longer optional, it’s infrastructural. This era is AI-native, not AI-retrofit. And the next era of design is context-centred, agent-aware, trust-sensitive, and participatory.

- 2026: The emergent AI-class – AI tech has kept accelerating (that’s recursive development for you), but the most interesting talks weren’t about capability. They were about consequence. What AI is doing to our thinking, our organisations, and the structures we’ve built over 150 years.

(Original MIT article: ‘The Turing Trap’)

But, buried in all the things going on, there was a clear divide opening up between people engaging with those consequences and people still treating AI as a bolt-on.

Too many were pawing at ‘AI needs humans’, ‘Creativity matters, guys!’ and, my pet peeve, the ‘We’ve seen this before’ types.

This stuff may well turn out to be true, but to me it feels like Hopeium and Copeium.

Note: Cybertrucks are everywhere in Austin.

Are you in the AI Class?

Across 30-odd sessions, most followed a familiar rhythm. Here’s AI. Here’s what it does. Here’s what you should worry about. Here’s where YOU matter!

But a handful of speakers were operating on a different plane entirely.

They weren’t talking about adoption.

They were talking about what happens after adoption.

To your leadership model. Your talent pipeline.

Your cognitive capacity. Your competitive moat.

These talks were AI-native in their thinking.

Not “how do we use AI?” but “what kind of organisation and what kind of human capability does this era require?”

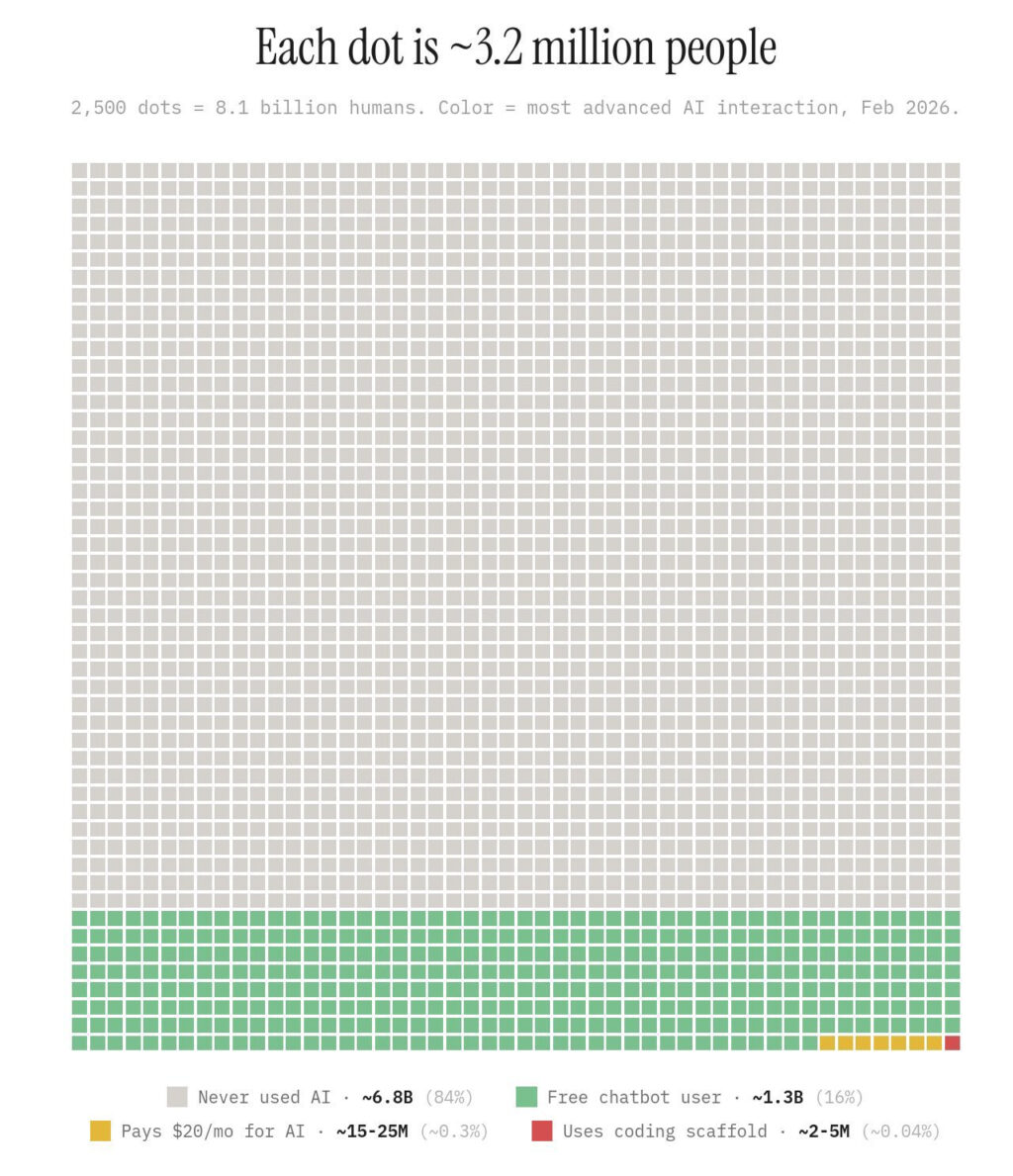

Brian Solis put a number on the gap: power users are operating at 7x the capability of typical users.

The following diagram was shared in Brian’s talk, illustrating the tiny pocket of advanced AI adoption right now.

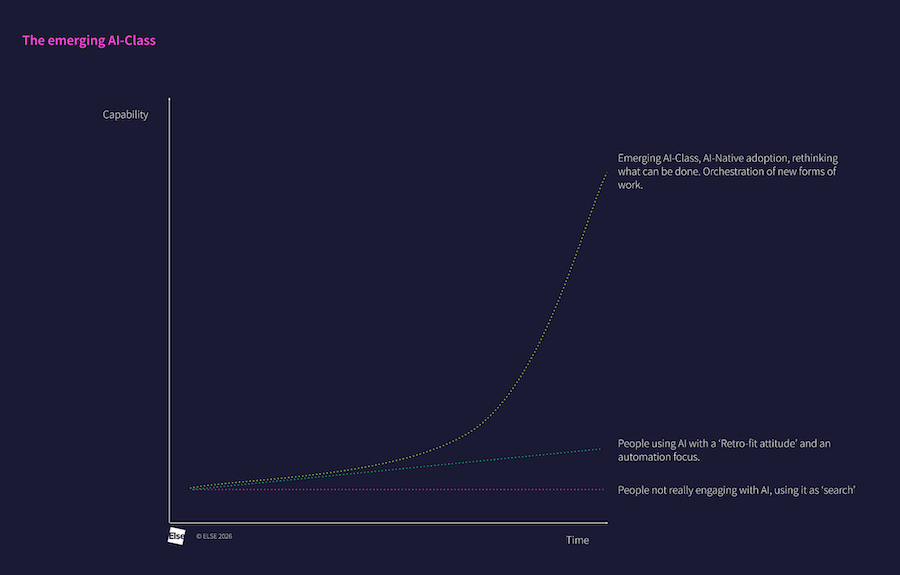

I see three curves diverging.

- People not engaging with AI: flat. In some roles, already sliding down.

- People using AI with a ‘retrofit’ mindset, automating yesterday’s work: gaining incrementally.

- People thinking and designing with AI natively, orchestrating what couldn’t exist before: accelerating away.

You could dismiss this as unrepresentative. A small group of the technology elite talking to each other in Austin. You could keep your head in the sand.

But what if they’re right?

People liken AI to previous technology shifts… “It’s just like when the App Store was created” or “It’s just like when desktop publishing arrived.”

And to some extent, this is reasonable.

But I find it being thrown around like a comfort blanket.

The difference:

a) the speed,

b) the breadth of impact,

c) the incentives.

Trillions of dollars have been sunk into AI. Do you really think they’re after your $20 a month subscription? No. They want to reorganise work. At scale.

If you engage with AI-native thinking and AI turns out to be less transformative than expected, you’ve still improved your organisation.

If you don’t engage and it is transformative, you’re in serious trouble.

The downside of action is marginal.

The downside of inaction could be terminal.

Eight Themes

This article introduces a series of eight themes, derived from the strongest talks at SXSW 2026. Each will exist as its own article for depth and explanation.

- The Efficiency Trap. Most organisations are using AI to do the same work better, faster, cheaper, and when AI gives people time back, they use it to do more of that same work. They’re accelerating an obsolete system.

- Leadership will break in the Agentic Era. When AI executes faster than your organisation can decide, traditional leadership practices and structures won’t be able to keep up.

- Context Is the Only Moat. AI is commodifying every capability that isn’t proprietary. Your context layer is your differentiator, and context engineering becomes brand gold.

- Cognitive Offloading vs Cognitive Surrender. AI can augment your thinking or quietly replace it, and the neuroscience evidence is harder than most people realise. Head work vs leg work: the way you choose to work will be formative, and good ol’ hard work is good for you.

- Trust at Scale Remains Undesigned. Everyone talks about the need for trust in AI systems. I’m seeing companies already spinning it as ‘what they do’. Lots of talk, but little concrete understanding of what it means in practice. To me, this is the near future for the design industry.

- From UX to AX. When agents do the work, design’s job shifts from execution to orchestration. Everyone becomes a ‘creative director’ with an army of tireless subordinates. Vision, judgement, taste, pattern recognition, experience all matter — hurrah (yes that’s my em-dash), but DANG, the middle gets squeezed. Think of a conductor: the musicians know their parts, they have the score, but the conductor shapes emphasis, dynamics, timing, feel. That’s where design value lives.

- Things Will Break. The Question Is What You Build Next. Underneath the strategic and structural themes runs an emotional current: the human experience of being in the middle of disruption. Multiple speakers acknowledged that the next few years will break economic models, creative practices, and professional identities. The response isn’t denial or acceleration, it’s intentional adaptation.

- The Craft Rewired. The old craft pipeline gave us intuition, taste, and pattern recognition as byproducts. AI is stripping those out. Simultaneously, the floor is dropping on execution and the ceiling is rising on orchestration. What does a designer actually do differently next Monday? This theme gets practical.

Every one of these themes contains a fork.

Which side you end up on is still, for now, a choice.

I’ll be breaking each down over the coming weeks, grounded in specific talks and lived experience. Because these aren’t just SXSW observations.

ELSE has been working on AI projects since 2018, and right now we’re deep inside a couple of genuinely complicated AI transformation programmes.

We’re not commentating from the sidelines.

We’re in the shift.

We’ve been moving ELSE to AI-native for a while now.

Building our own context layer.

Redesigning how we orchestrate human and AI work. Running audits on our own workflows.

Asking ourselves the same questions we ask our clients: are we retrofitting AI into how we already work, or redesigning the work itself?

What does that look like day to day?

People who move between operator mode and architect mode in the same morning.

Who build context layers as naturally as they build wireframes. Who treat curiosity and critical thinking as professional disciplines, because in a world of cognitive surrender, those qualities are what separate the emergent AI-class from everyone else.

We were over 40 people at one point.

That seems crazy to me now.

A small, deliberately assembled team can see further and move faster than headcount ever could, and we take responsibility for the future relevance of our people.

That’s the bet we’re making, because I know which curve I want to be on.

This is the first in a series from ELSE’s attendance at SXSW 2026. If you want the subsequent articles in your inbox, sign up below. We don’t spam. In fact, we’re far more infrequent than we’d like.

Else briefing

Don’t miss strategic thinking for change agents

